Culture Hacker

Bridging the gap between storytelling and technology. By Lance Weiler

Listen as Your Story Talks to the Internet

Without a doubt, this is an amazing time to be a storyteller. We have moved beyond the simple democratization of storytelling and production tools. Funding, marketing and distribution solutions are commoditized, providing filmmakers numerous opportunities to bring their work to an audience. And now a new phase is arriving, one that merges technology with the creative process. Filmmakers will soon be able to take advantage of a world of connected objects in what has been termed the “Internet of things.” And in this environment, as always, there will be a need for good storytelling to provide a level of understanding, entertainment and social value.

Before sitting down to write this column, I made a $165 contribution to a Kickstarter campaign to preorder a tiny sensor called Twine. I’ve contributed to many Kickstarter and IndieGoGo projects over the last few years, but none has captured my imagination like Twine. I’m not alone in my fascination; the company initially set out to raise $35,000 but in the end pulled in almost $400,000. The reason is simple: Twine is realizing the promise of the “Internet of things.” It is part of a recent wave of DIY technology solutions that take advantage of inexpensive sensors, faster processing speeds and connectivity to meld the physical world with the Internet. Started by two MIT Media Lab graduates, Twine is a way for you to “listen to your world, talk to the Internet.” Physical actions can trigger a variety of events online and vice versa. Twine is a motion sensor that is controllable with a simple Web interface.

Example: You place a motion sensor on your front door. When someone knocks, the action triggers snapping a photograph, which is then tagged with “someone at front door” and automatically sent out via a Tweet or Facebook post.

You might be wondering what that has to do with storytelling. Well, the “Internet of things” points to a path for connected interactions. Within a few years, most things — from cars to appliances to toys — will be able to wirelessly interface with the Internet. Think of them as objects in search of a story. And these connected objects won’t just be brands or consumer items. You will also be able to add connectivity to your own objects, such as props, locations or even your own merchandise.

Consider this…

1. Real world actions can unlock or trigger story assets such as audio, video or images on a website or mobile application.

2. An interaction on a website or mobile application can trigger an action in the real world in terms of a notification or story event.
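The two directions above can be sketched in a few lines of Python. This is only an illustration, not how Twine or any particular platform works: the `StoryTriggers` class, the event names (`sensor.motion`, `web.clue_solved`) and the handler functions are all hypothetical, and a real project would replace the returned strings with calls to an actual media player or notification service.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# A handler receives the event payload and returns a description of what it did.
Handler = Callable[[dict], str]


class StoryTriggers:
    """Hypothetical registry that maps named events to story actions."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def on(self, event_name: str, handler: Handler) -> None:
        """Register a story action for an event."""
        self._handlers[event_name].append(handler)

    def fire(self, event_name: str, payload: dict) -> List[str]:
        """Run every action registered for the event, in registration order."""
        return [handler(payload) for handler in self._handlers[event_name]]


def unlock_video(payload: dict) -> str:
    # Direction 1: a real-world action unlocks a story asset online.
    return f"unlock video '{payload['asset']}' for {payload['user']}"


def notify_prop(payload: dict) -> str:
    # Direction 2: an online interaction triggers something in the real world.
    return f"send notification to prop '{payload['prop']}'"


triggers = StoryTriggers()
triggers.on("sensor.motion", unlock_video)
triggers.on("web.clue_solved", notify_prop)

physical = triggers.fire("sensor.motion", {"asset": "scene-2", "user": "visitor-17"})
online = triggers.fire("web.clue_solved", {"prop": "lantern"})
```

Keeping the sensor side and the story side connected only by event names means a prop, a location or a web page can be swapped out without rewriting the narrative logic.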

Mark Harris (filmmaker and senior developer at Broadcastr) and I experimented with the “Internet of things” at Sundance a year ago when we launched Pandemic 1.0. Within the design, we left room for online and real-world actions to impact the narrative flow. We built a “Contextual Storytelling” engine that used an algorithm to measure Tweets, check-ins, blog comments and search terms, and to track the discovery of hidden objects throughout Park City with NFC (near field communication) technology. The spread of the pandemic and the pacing of the story were directly controlled by participants’ interactions. In the end, the project captured over a million data points and made use of more than 50,000 photographs. Afterward, the captured data made the 120-hour experience replayable. For instance, we could play the five days back in a matter of minutes if we sped everything up, or we could stretch it out over a month.
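The replay idea comes down to simple proportional scaling of timestamps. The sketch below is not the Pandemic 1.0 engine; the function name `replay_offset` and the specific playback lengths (10 minutes, 30 days) are assumptions chosen to illustrate "a matter of minutes" and "a month." Only the 120-hour duration comes from the project itself.

```python
from datetime import timedelta

# Length of the original live experience (five days).
ORIGINAL_DURATION = timedelta(hours=120)


def replay_offset(
    event_offset: timedelta,
    target_duration: timedelta,
    original_duration: timedelta = ORIGINAL_DURATION,
) -> timedelta:
    """Map an event's time since the start onto a shorter or longer replay."""
    # Fraction of the original experience that had elapsed when the event occurred.
    fraction = event_offset / original_duration
    # Place the event at the same relative point in the replay.
    return target_duration * fraction


# A data point captured exactly halfway through the five days.
midpoint = timedelta(hours=60)

fast = replay_offset(midpoint, timedelta(minutes=10))  # sped-up replay
slow = replay_offset(midpoint, timedelta(days=30))     # stretched replay
```

Because every captured data point keeps its relative position, the same recording can drive a short highlight replay or a slow, month-long retelling.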

Much of the infrastructure for creating seamless stories across connected devices is still being shaped. Solutions are often hacked together on an as-needed basis. One can already see how motion sensors, NFC and other real-world connected monitoring technologies are revolutionizing water conservation, health care, food distribution, utility grids and transportation management. NFC itself holds huge potential, as it will be at the center of a $1.4 trillion mobile transaction industry. Over the next year, banking institutions, credit card companies, telcos and major brands will battle it out to establish digital wallets in an effort to provide consumers with simple methods of payment for goods and services.

Historically, where technology goes, storytelling follows. This has been the case with production and distribution technologies. But now we are experiencing a shift; the ability to creatively embed stories within the real world will influence the next generation of social applications.

What does this mean to the average filmmaker? Well, Experience Designers will become the film directors of the 21st century, weaving emotionally engaging tales that connect audiences to each other and the world around them. Where the last decade was all about search, this decade is focused on curation. Stories will lead to purchases of goods and services, while providing a residual to the storyteller who originated them. A simple example of a current model is the mobile music app Shazam, which lets users discover and purchase music and takes a cut of each song bought.

As connected devices and services continue to develop, filmmakers will be able to place a story layer over the real world. Inanimate objects and physical locations will become opportunities to extend stories and engage audiences in ways that propel 21st-century storytelling.

Major festivals are embracing new opportunities to extend stories beyond the screen. This past fall, the Sundance Institute launched the New Frontier Storytelling Lab to support emerging forms of storytelling. A natural extension of the festival, the New Frontier Lab attracts multidisciplinary storytellers, technologists and game developers while putting the focus on story.

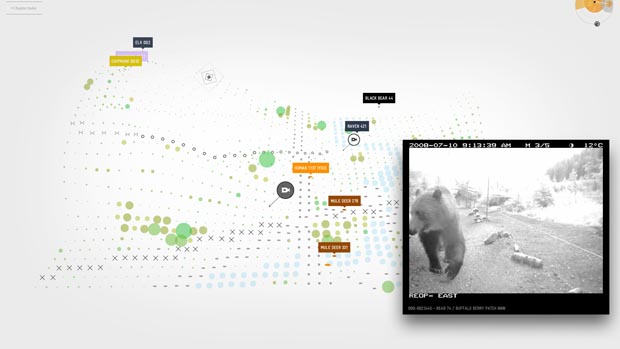

An interesting project premiering in the New Frontier section of this year’s festival is Bear 71. As the Sundance site describes, “Jeremy Mendes and Leanne Allison’s poignant interactive documentary about a bear in the Canadian Rockies illuminates the way humans engage with wildlife in the age of networks, satellites and digital surveillance. Audiences from around the world can use their smartphones to become part of an interactive forest environment rich with bears, cougars, sheep, deer and people as they follow an emotional story of a grizzly bear tagged and monitored by Banff National Park rangers.”

Along with the NFB, I’ve been working with the creative team of Bear 71 to develop an installation that brings the themes and emotional core of the project to life. Together, we have shaped a multi-user interactive experience that takes place within the New Frontier section of the festival as well as select locations around Park City. At its core is a unique experience that harnesses facial detection software, augmented reality, motion sensors, wireless trail cams, QR codes, projection and data visualization. Festival-goers become animals who are tracked within the storyworld of Bear 71, and through a special extension of this column, you too can roam Park City.

(IF YOU HAVE THE MAGAZINE) Look at page 17 to discover what type of animal you are and click here to experience Bear 71. For those of you who are in Park City, be sure to take this column with you to the New Frontier section of the festival to unlock a special part of the story.